Growing up is a grim business. Whether it be your taxes, manager, mother, or therapist, the burden of responsibilities begins to weigh heavily amid the fast pace of everyday life. The greatest tragedy of this human condition is the inevitable reality that encroaches upon us all: we will no longer have the time to play our beloved video games, a fate akin to death. Too often have I heard my fallen comrades utter the words “grow up” and lament that “there’s just no time anymore.”

Games, for the average person, become unfeasible time-sinks and a childhood hobby to be grown out of. Yet this phenomenon is more complicated than the age-old misconception that games are inherently a waste of time, consisting of meaningless, empty pursuits of high scores and experience points. Even the best narratively driven games demand 60-hour playthroughs with dozens of quests to complete, resulting in experiences that feel redundant and ultimately fruitless. The key issue at the heart of this is that much of modern gameplay seems to waste the player’s time because it is not narratively justified.

Endless places to go and people to meet. But to what end?

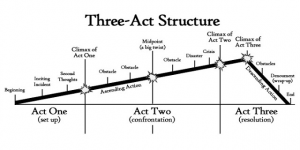

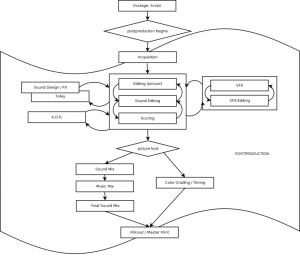

I have found endlessly satisfying and dense narrative experiences in film, gaming’s very close cousin. In film, directors work with editors to splice together a series of images to form a whole narrative for viewers to experience. It just so happens that, after over a century of experimentation with technique and the Three-Act Structure (a narrative model for constructing plot and character arcs, pictured below), films have found a sweet spot of around two hours, in which people may sit down and watch a whole narrative unfold in a single sitting. This has proven to be an incredibly convenient feature, and it has helped make narrative film the accessible and renowned medium it is today.

Filmmakers benefit from the power of editing, which allows them to fine-tune, frame by frame, the images a viewer will see and thereby grants them control over the length of the work. Films are perhaps among the shortest forms of narrative art because conventional film editing philosophy demands that no time be wasted: every frame must contribute to the meaningful development of the film’s narrative.

The Three-Act Structure: a universal framework through which stories may be constructed, and one that does not seem to require a large number of scenes or events.

In this paper, I develop a new concept that I call ludonarrative inertia, which refers to the moments of a game during which the gameplay does not contribute to the game’s narrative. After establishing a firm understanding of this concept, I explain how film editing principles could be adopted into narrative game design philosophy as a means to mitigate the risk of ludonarrative inertia. The ultimate purpose of this paper is both to show that ludonarrative inertia is a valid issue in narrative video games and to propose a new design framework through which developers and gamers may identify and avoid this issue.

Why Narratives Matter in Games

Narratives in both video games and film can be split into two key components: story and character. Other elements of narrative, such as setting and atmosphere, can certainly be important, but story and character are the two that matter most when editing and designing gameplay. They are the essential backbone of narrative, and the other elements serve their development. At the core of the narratives that impact us most, we universally cling first to a relatable protagonist (i.e., we invest in who they are and what motivates them) and their story (i.e., the events that bring them to closure). A memorable setting such as The Shining’s Overlook Hotel may indeed be a crucial part of the film, but it is ultimately a device through which the narrative can culminate in Jack Torrance’s (character) split from reality (story). When I speak of “story,” I mean what the narrative is about in essence, including its overarching themes and the emotional journey of its characters. Plot, on the other hand, consists of character actions and events in an arranged order. Plots can be manipulated and variably structured, while the story is the idea or essence of these events in their entirety.

Uncharted 4 (top) and Gone Home (bottom) both have great narrative ambitions, but they diverge in their approaches to plot. And no, the bottom screenshot is not what Sully and Nathan are looking at in the top screenshot.

Films and video games can have endless amounts of plot but still lack a substantial story. Technically, a film in which a character walks endlessly back and forth between two rooms could be an epic plot, with every subsequent scene depicting the next iteration of that character’s time in each room. The character might perform actions in each room, such as flipping a table or sitting on a chair for a moment, which still qualifies as plot. Five hours may pass, and you might leave with little to take away from that character’s “emotional journey”; further, nothing in that fictional world is meaningfully affected by that character’s actions. Such a film would have a plot, but no story.

Transformers: Age of Extinction (top) is bursting at the seams with considerable plot, but its story pales in comparison to the emotionally dense and character-heavy Moonlight (bottom), whose own plot is loose and exploratory.

Now, consider The Stanley Parable, but remove its narrator and every room in the office beyond the first few. Imagine walking between rooms in the office as the avatar: every player input contributes to the video game’s plot. Yet with the narrator and the rest of the level design stripped away, the player can no longer engage with a larger story about frustration with monotony and obedience. This example shows that, like films, video games suffer from cases in which some of their content has more to do with plot than with story.

This leads to my point about characters as agents of plots and stories. Characters should (1) change in their emotional or cognitive state, a change typically referred to as a “character arc,” and (2) affect the plot in a way that contributes to story. A story based on characters cannot move forward without the characters performing actions. If the story is inherently about the characters’ journeys, then forward progression stems from either changes to their emotional and cognitive states or changes to their position in the overall story.

A dynamic, progressing narrative is therefore defined by the fundamental changes to characters and the stories that they shape. Star Wars, for example, is a compelling narrative because we remember how Luke Skywalker achieves his dream of going from naive and restless moisture farmer to successful fighter pilot, all the while working with his newfound friends to save the galaxy from the dominion of the Empire.

The Stanley Parable (top) prompts players to forge their own destiny, demonstrating the player-avatar dynamic’s importance in creating an interactive plot and story. The formative decisions and emotional changes the player experiences parallel those of the most compelling protagonists in film, such as Luke Skywalker in Star Wars (bottom).

Game developers are in a unique and challenging position: gameplay and narrative are often at odds in both the developer’s mind and the gamer’s mind. Gamers may pick up a game with the intention of purely enjoying the gameplay for its own sake, disregarding any semblance of narrative. Similarly, developers may devote all their time and effort to gameplay systems, leaving the narrative as an inconvenient necessity.

Yet even games without narrative ambitions contain essential narrative components that cannot be ignored. Games thrive on robust settings, characters, and plot lines that form the main thrust of gameplay experiences. Otherwise, we may just be shooting people and jumping onto armadillos for no apparent reason other than to feel a rush of dopamine. The presence of these constituent narrative features commits the game to representing a narrative, whether or not that was the developer’s focus. So, to ignore any game’s capacity to tell a story would be to ignore a substantial part of the experience the game provides its players.

We have suffered through poor, inefficient video-game storytelling as if it were unavoidable. Overlooking poor storytelling because of the promise of compelling gameplay, or because of the pitfall of authorial intent (i.e., claiming the developers simply were not concerned with telling a good story), ignores the game as a whole. If a game has characters and events, then it has a narrative. Therefore, a poorly constructed narrative, whether or not the developers intended it, is still a failure in game design that we ought not to tolerate.

For instance, recall how in the God of War series you would have so much fun killing mythological creatures, only to be forced to endure perfunctory story cutscenes, merely waiting for the game to finally allow you to kill the next harpy who looked at you the wrong way. In contrast, Final Fantasy XV may have featured a promising story, beautiful settings, and potentially rich characters, but your time is spent mindlessly collecting plants and killing crabs instead of meaningfully building on these narrative components. And, in the tragic case of Metal Gear Solid 4, players are forced to wait and watch as the narrative is explained to them in 20-minute short films between levels, and are then thrust into sandbox stealth levels in which the only narrative accomplishment is moving Snake from Point A to Point B.

Both Final Fantasy XV (top) and The Last of Us (bottom) feature a richly detailed world to play in, but it is the latter that actually has something to say about it.

Players have been conditioned to enter the worlds of video games with the mindset of either solely “playing” the game or solely “watching” the narrative unfold, scarcely ever enjoying the pleasure of gameplay and narrative intertwined. Too often must gamers experience gameplay without thinking about narrative, and narrative without thinking about gameplay. Instead of an ideal marriage between gameplay and narrative, gamers are left to endure ludonarrative inertia.

Horizon: Zero Dawn empowers you to fluidly dispatch robot monsters in a beautiful world, which consequently prompts the player to think more closely about prejudice and alienation. Wait, what?

What the Hell Does “Ludonarrative Inertia” Even Mean?

“Ludonarrative inertia” is a play on the recently popularized critical term “ludonarrative dissonance,” which refers to contradictions between a game’s narrative and its gameplay. The term was coined by Hocking (2007) when he criticized BioShock’s narrative for not delivering on the theme of choice offered by its gameplay. Roughly, he argued that the gameplay appeared to promise players the ability to choose between two narrative paths (to help Atlas or not), but that the narrative broke that promise by ultimately forcing the player to help Atlas by the end.

Thus a new, pretentious term was born: ludonarrative dissonance. Since then, it has been applied to a motley assortment of other modern games, such as the Uncharted series. In Uncharted, the player virtually murders hundreds of mercenaries without a second thought, but is nonetheless expected to view their avatar, Nathan Drake, as a morally good, decent man. The gameplay characterizes Nathan Drake differently from the narrative, which creates ludonarrative dissonance. In other words, the gameplay appears to tell a different story from the one the narrative intends.

Nathan Drake: mass murderer by day, fuqboi by night.

The valuable insight that I have taken from Hocking’s work is that the relationship between gameplay and narrative is dynamic, and should not be presumed to be consonant. Let’s call instances of compatible gameplay and narrative in video games instances of ludonarrative consonance, which is the counterpoint to ludonarrative dissonance.

With this dichotomy in mind, I had to conceive of a new, third term to describe my own plight: an itching feeling that certain instances of gameplay neither help to reinforce the narrative, nor directly contradict it—the kind of gameplay that just has no bearing on a game’s narrative one way or another. This kind of gameplay occupies a middle ground between consonance and dissonance, and the best term I could settle on was inertia, which means “a tendency to do nothing or to remain unchanged.”

I understand that I am muddling the laws of physics here, but let us work under a paradigm in which we refer to a video-game narrative as if it were a force in motion. Gameplay can help move that narrative forward and build upon story and character in a meaningful way (consonance), or it can actually move the narrative backward by diminishing the stories and characters that the game has tried to establish (dissonance). Ludonarrative inertia refers to gameplay that operates in a neutral middle ground: it has no direct impact on the narrative and is thus inert with respect to it.

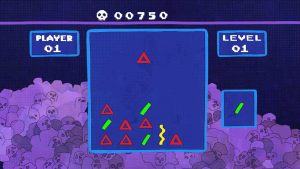

In a vivid and hilarious example of this very phenomenon in pop culture, BoJack Horseman features a satirical video game called Decapathon VII, whose title suggests a franchise built on decapitation and violence. Its cover art features a burly, epic fantasy character holding a female sex cat slave in “Jabba the Hutt”-esque chains, ostensibly depicting the adolescent male fantasy of slaying and sleeping with feline beings. If this thematic premise sounds exciting, you may be surprised to discover that the actual gameplay consists of nothing more than solving Bejeweled-style puzzles.

Clearly, there was something amiss in the fictive development of this game.

Decapathon VII in all of its shape-matching glory.

To see why ludonarrative inertia is an important and relevant concern for modern game design, it helps to understand the origin of this ideological tug-of-war between gameplay and narrative. “Ludonarrative” refers to the synthesis of gameplay and narrative. The term was meant to reconcile two disparate philosophies: ludology and narrativism. Suduiko (2017) summarizes these arguments in detail, but they boil down to a purist debate over whether video games are inherently just gameplay systems (ludology) or just narratives (narrativism), with the two treated as mutually exclusive. In other words, ludologists such as Bogost (2017) believe that video games are best understood as interactive simulations of gameplay systems, and claim that narratives are better told in other mediums. Indeed, prominent game developers such as John Carmack have shown inclinations toward ludology as a game-design principle. We see this philosophy pretty clearly in Carmack’s Doom series, as well as in his personal statements, such as:

“Story in a game is like story in a porn movie. It’s expected to be there, but it’s not that important.” (John Carmack, circa 1993)

Here, Carmack frames storytelling in video games as more of a chore than a creative opportunity. To be fair, he said this decades ago, during a time when video games as a medium were still in their infancy. Carmack and his contemporaries had not had as much exposure to games that told compelling stories, let alone games that told great stories through gameplay.

On the other side of the ludology/narrativism debate, narrativists argued that every video game tells a story, going so far as to claim that Tetris perfectly reflected the condition of overtasked Americans in the 1990s (Janet Murray did exactly that in her 1997 book Hamlet on the Holodeck).

Both of these philosophies are reductive and ignore the reality that video games depend on both gameplay systems and narratives, and often feature successful cases where the two are harmoniously intertwined. This has given birth to a type of storytelling unique to video games: storytelling that builds upon player input and decision-making.

Sonic and Doom take place in vibrant worlds and feature seemingly unique narrative parts, but mostly only aspire to give you a rush of adrenaline and dopamine.

I do not blame ludologists and narrativists for thinking in such a binary, as developers practice design principles that do seem to prioritize either narrative or gameplay at any given moment. Some developers may focus on compelling gameplay systems without giving them a clear narrative purpose, while others may focus on designing narratives without connecting them to what the player is actually doing. Experiencing video games that seemingly focus on either solely gameplay or solely narrative will naturally skew us to think about games in terms of this binary.

Ludology and narrativism are useful frameworks for thinking about video game design because gameplay systems and narratives are often designed somewhat independently of one another and then mashed together, with the expectation that they will somehow work together perfectly. In a recent case, Eidos Montreal’s Deus Ex: Human Revolution promised a narrative in which players could choose to be completely lethal or non-lethal, and its gameplay, for the most part, delivered on that promise. The boss battles, however, forced players to kill these bosses in loud and explosive encounters that failed to match the more nuanced gameplay experience found throughout the rest of the game. It should not be surprising, then, that development of these boss battles was outsourced to a different studio, one that apparently had a different agenda.

Simply look at the credits of any video game, and you will find that the game’s “writers” are rarely the people who worked as coders or designers on the gameplay systems. This is analogous to film production, where the film’s writer is almost always someone other than the cinematographer. The onus falls upon the creative director to synthesize the two, and those directors are not always successful.

To understand the game industry’s historical difficulty in synthesizing gameplay with narrative, remember that video games were originally designed as digitally interactive toys and playthings. From the ludologist perspective, Space Invaders presented players with an implied goal (to save the galaxy) and various obstacles that stood in the way of that goal (deadly aliens), and the experience was all about overcoming these obstacles to reach that goal. The appeal comes from the visceral pleasure of accomplishing a goal and overcoming a surmountable challenge, which results in both a biological and an emotional reward in the form of dopamine and pride, respectively. Games were conceived to present the perfectly designed, surmountable challenge, one that is fun while testing a player’s skill, and consequently to make players feel as though they have accomplished something noteworthy by the end of a level. This sensation of reward and fulfillment ultimately drives players to continue playing for the promise of even more gratification.

On the other hand, Space Invaders’ narrative is minimal, and acts as something like a “skin” to make this gameplay system more presentable and more fun to look at than, say, the geometric shapes of Decapathon VII. The minimal narrative imbues the gameplay with a story and characters for players to “invest” in, so that they may ultimately believe that they themselves are saving the galaxy from these invaders (hence John Carmack’s porn comparison). In reality, no one cared about Space Invaders’ narrative, because this type of story had already been told better in film and literature. Ask people why they enjoyed Space Invaders, and every response will be about their enjoyment of its gameplay systems, never its narrative.

This design principle—using narrative as a skin for gameplay—has sustained entire genres that have fundamentally changed very little, like fighting and racing games. Generally, these games do not have the intention of conveying a robust narrative. Their developers are mostly concerned with making the most combo-filled fighting game and the most realistic-feeling racing simulator since the last iteration of Street Fighter or Gran Turismo, respectively.

Arcade-based games such as those in the racing and fighting genres typically don’t aspire to give you narratives to write home about.

Then, after the age of Space Invaders, rendering actual character models in sprites and polygons became more viable, and developers realized that they could couch their video games in an overarching, legitimate narrative. The Final Fantasy role-playing game (RPG) series was at the forefront of every graphics generation (sprite, pixel, and polygon) as a video-game storytelling force, showing players that they could meaningfully engage in narratives through interactive gameplay.

However, there is currently no established narrative grammar or standard for games to follow. Games are still experimenting with how to present their narratives, as seen in “walking simulators” such as Firewatch and Gone Home. One controversial video-game storytelling technique is the cinematic cutscene (cheating, if you ask me), which is used to separate and connect different sections of gameplay and to place all player actions in a narrative context. Some of my favorite games (and probably yours, too) have relied upon cutscenes to deliver at least some, if not most, of their narratives, while the gameplay typically acts as a fun aside to that narrative. Some recent games, such as GTA V, Bloodborne, and The Last of Us, have been regarded as fusing gameplay and story together especially well, but it is difficult to point to any one in particular and claim that it should be the model for video-game storytelling. Gamers might claim that The Last of Us is too narratively driven, while The Legend of Zelda: Breath of the Wild is too systems-based. To this day, neither gamers nor developers seem to have a unified idea of how ludonarrative consonance can be consistently achieved.

Both celebrated narrative games, GTA V (top) and Firewatch (bottom) approach video-game storytelling through drastically different themes and mechanics.

Cinemanarrative

Because ludonarrative consonance is a fairly recent and underutilized framework for examining video-game storytelling, it would be helpful to examine what we can learn from film in an effort to overcome ludonarrative inertia.

It just so happens that video games and film have formal similarities: just like games, films can suffer from an inert relationship between their mechanics and the narratives they intend to tell. We can call this cinemanarrative inertia: moments during which a film’s cinematography (its presentation of moving images) might superficially build upon the film’s plot but fails to meaningfully contribute to the narrative (its character and story). This parallels ludonarrative inertia, in which a video game’s gameplay (the collection of player inputs and decisions) might superficially build upon the game’s plot but fails to meaningfully contribute to the narrative. Thus, both mediums’ mechanics have the potential to fail in a storytelling capacity, but it is currently film that possesses the more formal and effective editing method of preventing narrative inertia.

Films and video games as forms of narrative art were born from the same DNA. They are both distinct from literature in that they are consumed through visual images. They are also inherently multimedia in their audiovisual hybridization, while video games add the element of interactivity into the mix. The fundamental difference between the two mediums is in their popular functions. Films are popularly understood to be a means of storytelling, whereas video games are popularly understood to be a means of interacting with fun and challenging gameplay systems. Video games might be able to tell a story, but their purpose for many gamers is still essentially to just deliver an entertaining game.

Yet that does not mean gamers should ignore gameplay’s ability to contribute to narratives. Instead, gamers should be excited by the thought of experiencing gameplay that goes beyond its original function of being fun and challenging, and should dare developers to experiment with their craft, just as Jean-Luc Godard once did during the French New Wave, a radical reaction to classical Hollywood conventions.

The French New Wave (top: Breathless) challenged Hollywood (bottom: The Maltese Falcon) by taking on new aesthetic values, such as real locations, handheld cameras, and natural sound.

Video games have much to learn from film. The two are relatively young visual mediums of art, both having been conceived in the 20th century. Film’s initial function, like photography’s, was to document real life or to offer beautiful images to appreciate. Then some rebels picked up their cameras and decided to tell fake stories with them, and that was the seed that, after decades of developing a cinematic language, brought us Citizen Kane.

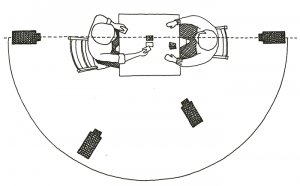

Developers and consumers can come to a better understanding of what makes for “good” video-game storytelling by taking a page from film’s playbook and establishing a kind of “formal language” for how stories work in video games. In film, a formal language manifests in certain filmmaking “rules,” such as the 180 rule and the shot-reverse-shot technique for filming a simple, logically framed conversation between two people in any space. As a result, both filmmakers and viewers know on what terms and grounds they can formally critique and analyze a film. Of course, video games do not yet have as well-established an academic literature to draw from, so it makes sense to learn from film’s own model of narrative theory, as well as its established storytelling language.

The 180 rule (top) ensures the viewer can logically place the speakers in a conversation, with the policeman on the left in one shot, and the Dude on the right on the reverse shot (bottom).

The relationship in video games between gameplay and narrative is perfectly mirrored in the relationship between images and screenplay in film. This parallel was first drawn in a recent video essay by the YouTube channel Errant Signal, titled “The Debate that Never Took Place” (YouTube). Errant Signal used the term “cinemanarrative” to describe the relationship between film’s images (cinematography) and narrative (screenplay), which are determined by different people in the filmmaking process. In fact, the screenwriter is often left out of the actual filming process entirely. There are many cases in which cinematographers visually change the meaning of the screenwriter’s scenes and characters. Even though viewers would like to think of films as a perfect marriage of cinematography and narrative, cinemanarrative dissonance (an analogue to ludonarrative dissonance) can often arise, even in high-budget films strictly controlled by studio agendas. This is seen in the Transformers series, where Michael Bay’s camera objectifies, fetishizes, and demeans Megan Fox’s Mikaela, a character who was written to be a strong heroine (Folding Ideas, “Ludonarrative Dissonance,” 2017).

Mikaela is the scantily clad, always perfectly positioned mechanic, who happens to be into nerds. I guess dreams can come true!

Thus cinematography in film, like gameplay in video games, is a mechanic used to deliver and build upon the narrative through the presentation of telling images. Films employ this storytelling in units ranging from single images (shots) to entire scenes; games employ it in units ranging from specific player inputs (such as jumping over hills or clubbing a goblin) to entire quests and storylines. When a player engages in inputs and quests, they are continually experiencing and moving a collective narrative forward. Just as we view film narratives as a sequence of images, we may view video-game narratives as the player’s collective sequence of inputs and decisions. With this framework in mind, it should now be easy to see how examining the cinemanarrative relationship can help us better develop the ludonarrative relationship and avoid the danger of ludonarrative inertia.

Film Editing

It is said that films are truly made in the editing room. Having edited two short narrative films myself, I can confidently say that both turned out substantially different on screen from their written screenplays. This is partially due to my amateur mistakes while filming; however, the filmmaking process also taught me that what seems great on paper can play out entirely differently in visual practice. Editing allows a filmmaker to capture the true narrative at heart and to include only images that contribute to the story, the character, or both, ruthlessly cutting any plot that leaves the narrative inert.

Film editing is admirably rigorous, with tenets that could be applied to video games as well.

Case in point: the movie really is made in the editing room.

For game developers, however, the path to an analogous editing process is less defined and less obvious. Film editors benefit from simultaneously being prospective viewers of the film: they need only watch the images themselves and gauge whether those images deliver the emotional results they intend. Game developers cannot fully anticipate what a player might input or do in their games. So, beyond playing the games themselves, developers also hire playtesters to play the game and evaluate whether the systems work without crashing. I should say, though, that I have not heard of a formal push or strategy for playtesters to assess whether games also deliver a compelling narrative, in the way that studios screen films to focus groups for instant feedback during the editing process. Because film has been built upon a more established filmmaking language and technique, people who watch many films develop a better sense of successful filmic storytelling. The same cannot be said for games, so individual playtesters may hold significantly different expectations for good video-game storytelling and report widely different opinions on a game’s narrative. Playtesting for bugs, glitches, and the ability to complete a game naturally yields more concrete and actionable insights for developers. Therefore, it is naturally easier to orient playtesting toward mechanics and gameplay than toward narrative.

So, other than choosing which content to render and which tasks to actually give players, how else can game developers actually “edit” their games to ensure ludonarrative consonance and prevent ludonarrative inertia? How might developers ensure that gamers feel, for the majority of their playtime, that the gameplay in which they are engaging is continually contributing to the narrative?

I’d like to propose an answer to this question by considering the editing principles Walter Murch outlines in his book on film editing, In the Blink of an Eye (Murch, 1992). There, he presents general rules, such as “cutting out the bad bits” and depicting the “most with the least,” which would seem obvious, if not for the many video games and films that seem not to follow them.

Yet, the most interesting rule he outlines is the “Rule of Six”: a hierarchy of elements to prioritize when editing images together. They are the following:

- Emotion: 51%

- Story: 23%

- Rhythm: 10%

- Eye-trace: 7%

- Two-dimensional plane of screen: 5%

- Three-dimensional space of action: 4%

Murch’s advice is essentially to edit images so as to preserve these six elements while respecting their relative importance, as indicated by their assigned percentages. In a scene, then, it is most important to preserve emotion and story; should the last four elements have to be compromised to preserve these two, then so be it: cut away. Other concerns, such as spatial or temporal continuity, matter less than emotion and story.

Now, this Rule of Six does not translate to game design on a one-to-one basis, because elements such as the “two-dimensional plane of screen” and the “three-dimensional space of action” are specific to how a filmmaker frames the space of a scene. Video games do not necessarily need to be concerned with these elements, because the player’s “camera” (i.e., their perspective on the game’s world) is usually continuous and stable during real-time gameplay, typically controlled by the right analog stick or mouse. There is no editor cutting between camera angles in the middle of gameplay; instead, the responsibility for preserving spatial continuity is placed in the hands and thumbs of the player.

Woah. Bet you didn’t notice that.

However, there is a surprising insight in this hierarchy that suggests possible methods of subverting current game development conventions.

Murch lists spatial continuity and logic as the very last priority in film editing. Predictably, beginner filmmakers pay an inordinate amount of attention to preserving spatial continuity in their films. It feels necessary for a film to maintain spatial logic for the sake of internal consistency: images with discrepancies and contradictions break the illusion and the viewer’s suspension of disbelief, leaving viewers confused and jarred by sudden changes to the space they feel situated in. However, Murch’s logic is that, as long as the viewer is cognitively absorbed by emotionally compelling images that tell a coherent story, these continuity errors go unnoticed. No one notices or cares about technical inconsistencies when they are already emotionally engrossed in character and story.

This invites the question: are there analogous elements of gameplay that developers wrongly assume are especially important to the coherence of their narrative?

Here is my completely made-up, provisional “Rule of Six” for gameplay design:

- Character: 25%

- Story: 25%

- Emotion: 20%

- Immersion: 20%

- Fun: 5%

- Challenge: 5%

This is admittedly more arbitrary than should be acceptable. However, I intend this gesture to symbolically challenge the popular belief that a video game needs to be both fun and challenging in order to count as a video game. I’m drawing a direct parallel to the popular belief that spatial continuity is essential to a visual medium like film.

Murch insists that effective film, at its core, still values story concerns over cinematographic concerns. Cinematographers and film crews may cringe at the thought of images being hacked together by editors, ruining perfect compositions and beautiful spaces. But, at the end of the day, story is king. Critics and gamers have also often said that “gameplay is king,” which can be true in a sense, but I would edit it a bit: “for single-player narratives, gameplay that meaningfully adds to story and character is king.”

Monster Hunter (top) and The Legend of Zelda: Breath of the Wild (bottom) both feature very similar gameplay mechanics, though Zelda more purposefully ties these actions to a larger narrative.

The belief that gameplay must always be fun and challenging is rooted in the old-school principles of game design that gave us the brutally challenging platforming levels of Mega Man. In that tradition, the best gameplay systems provide a finely tuned, surmountable challenge: challenging, but not so challenging as to be impossible. The best gameplay, according to this tradition, forces players to work and practice to achieve their goals and thereby feel a sense of accomplishment.

Mega Man isn’t really about the avatar or why he’s trying to jump over flaming pits of lava: it’s about how fast you can jump over those flaming pits of lava!

This mindset has also led critics to dismiss games such as Gone Home as not being “real” video games, though my previous paper on its open-house narrative explains that most of the game’s player inputs, together with its inherently puzzle-solving gameplay, consistently contribute to character and story. Gone Home is not particularly fun, nor is it challenging in the way that twitch shooters or hardcore platformers are, with their demand for absolutely precise player inputs, but I still consider it an achievement of ludonarrative consonance. Every move you make as the avatar, Katie, reflects her desire to reconnect with her estranged family. This is achieved not through heavy-handed, blatant cutscenes or conversation sequences, but through the exploration of the family’s lived-in home and Katie’s tangible engagement with their belongings. The game turns puzzle and exploration gameplay into an experience that continually builds on story, character, and emotion. Perhaps the greatest achievement of Gone Home is its ability to deliver more narrative in its 2 hours than most games attempt in 20.

By now, I have already spoken to the importance of character and story in video games, and to why gameplay should meaningfully add to these two components. Yet I have also added two more categories, emotion and immersion, to my Rule of Six, at an almost equal level of importance. Emotion is included because I agree with Murch’s assertion that emotion is one of the reasons why visual art is compelling and why audiences feel the need to keep watching. Images and gameplay should continually elicit an emotional reaction from the player, which leads them to think further about either the gameplay itself or the narrative. Think of the difference between Gone Home and a pay-to-win iPhone game such as Candy Crush, which, as far as I am aware, has no narrative ambitions. Candy Crush’s gameplay engages player “emotion” on the basis of challenge and reward, and not much else. Gone Home’s gameplay of exploration and puzzle-solving, on the other hand, forces the player to confront the separation of the avatar’s family and listen to her sister’s struggles in adolescence. A player might feel sad, alienated, and lonely while exploring this cold, austere home in the middle of a dark and stormy night. There is real emotion there, and it varies and changes as the game goes on.

Let’s redefine the victory screen beyond the gratification we feel when we finish a level in a mobile game. Perhaps the most rewarding narrative victory screen is akin to discovering a beautiful memento in Gone Home.

I added the category of “immersion” to the Rule of Six because I see it as a loosely defined, subjective quality that ties character, story, and emotion all together. It is difficult to concretely define, beyond calling it ‘the ability of a game to keep the player engaged in the video game narrative with continually unbroken attention, in the same way that films also aspire to keep the viewer engaged in their narrative with continually unbroken attention’. Such immersion might be composed of elements such as music, UI/HUD decisions, graphics quality, art design, or even voice acting, all of which contribute to the feeling that the player has escaped from their world to that of the game. The moment that a viewer or a player breaks from that immersion and becomes focused on something other than story, character, and/or emotion, the viewer/player has broken from the narrative and is instead consuming the work superficially. In the case of video games, the player is then completely consuming the work from a systems-based, gameplay mindset; in the case of film, the viewer is consuming it from a completely cinematographic mindset.

By now, you might have already thought up several counterexamples to my provisional Rule of Six: surely, you might be thinking, there must be games whose gameplay is incredibly fun and/or challenging while also having rich narratives. You’re probably right about that. Yet reconsider such gameplay once more, along with its impact on the top priorities of the Rule of Six: character, story, and emotion. In a game as gameplay-system-heavy as Bloodborne, the main appeal initially seems to be challenging gameplay, the very element my Rule of Six minimizes. Bloodborne’s challenge, however, both serves and is motivated by character, story, and emotion. The prospect of continually dying and enduring monumental challenges in the face of Lovecraftian horrors instills a sense of perseverance and foolhardy heroism that defines your sympathetic avatar. The player’s perseverance, assuming he or she does not rage-quit forever, mirrors the avatar’s poetic cycle of dying again and again until you finally reach what few achievements the game allows. Depending on your interpretation, this challenge might offer a larger commentary on the human spirit, one that is optimistic about its ability to endure even in the face of sheer terror, futility, and death, because the developers have still designed an attainable victory state. Lastly, the challenging gameplay forces the player into states of agitation and fear, interspersed with moments of relief.

While some could argue that Bloodborne has its inert or redundant moments, it shows that the most important narrative elements for gameplay mechanics to engage are story, character, and emotion, regardless of whether the gameplay is fun or challenging. Bloodborne is always challenging, rarely “fun,” but always narratively motivated.

Bloodborne’s dangerous, yet enchanting world is a character unto itself.

At the same time, however, games such as Alien: Isolation may deliver ludonarratively consonant gameplay that eventually becomes inert through redundancy and excess. In the case of Alien: Isolation, what started off as a tense and harrowing cat-and-mouse horror experience turned into 15 hours of that very same mechanic in different environments. The gameplay begins by developing the avatar, Ripley, as a resourceful and empowered heroine facing off against the perfect organism, the very same narrative premise as Alien, its filmic inspiration. However, this repeating cycle of gameplay (escaping the alien’s clutches and pulling levers and switches) becomes ludonarratively inert when it merely repeats what has already been conveyed for hours on end, stretching the narrative ambition of a 2-hour film into a grating 15-hour gameplay experience.

The fundamental difference between the Alien film and the Alien video game is that the film’s editors understood that every minute needed to add to the development of Ripley’s character arc, emotional thrills, and survival story, without being repetitive in a narratively unmotivated way. The developers of the game, in contrast, brazenly padded its narrative with redundant story and character moments to extend its playtime and appease player demand for “longer” experiences worth their hard-earned dollars.

15. Hours. Of. This.

Whether through arbitrary tasks or necessary grinding, developers commonly design gameplay with the goal of keeping players busy, rather than moving the narrative forward.

Conclusion

Reconsider why we need to spend 60 hours playing Mass Effect: Andromeda when its narrative rewards pale in comparison to those of the brisk, 2-hour Jurassic Park. It is not necessarily the case that games like Andromeda are entirely ludonarratively inert, but they likely feature some content and quests that are. Gamers play mindlessly through quests without a narrative thought, and game conclusions feel so dissatisfying, because gamers spend a good proportion of their playthroughs engaged in inert gameplay. The result is hours spent without any meaningful development in their character or their story. Gamers rarely even realize they are experiencing ludonarrative inertia, and so they simply accept games that waste their time.

To make games that adults cannot outgrow, developers and their games need to outgrow traditional design principles. Experiment with different forms of gameplay, and continually test the gameplay’s relationship to the intended narrative. Sincerely edit, and reflect on whether this side quest or this core gameplay mechanic is actually respecting not just every hour, but every minute of a player’s valuable time. Enrich players with a meaningful narrative, even when they aren’t expecting it.

3 Comments

Shaman;Girls · October 25, 2017 at 9:57 pm

While it is true that many games present significant amounts of what you call “ludonarrative inertia,” I do not believe ludonarrative inertia is inherently a negative concept. Consider your use of Monster Hunter as an example. It does have a very minor “single-player narrative,” but undeniably, its main draw is the gameplay. If we were to center a Monster Hunter game around story, it would become exactly like Soul Sacrifice, God Eater, or Freedom Wars. Fans would clear the story in 10-30 hours, and spend the next 100-500 on “side quests” that do not affect the story in any way. This is ludonarrative inertia that fans of the series want and expect. It offers replayability without replaying a story one has already experienced. When gamers have little time for entertainment, they do not necessarily need their time to be “narratively justified.” Some prefer “pick-up-and-play” games that they can start up, play a few rounds/hunts/etc., and quit. I certainly do not want to be forced to drop an engaging narrative right before the climax due to time constraints.

Ludonarrative inertia has its place, but I do believe that developers need to stop inserting lazy time-sinks such as open-world collectibles.

I am not saying a high-quality story does not belong in any game. In fact, I’m sure most players would appreciate one, even if they did not ask for it. Unfortunately, the triple-A video game industry seems to prioritize profit over artistic direction much more frequently than the film industry does. Why “outgrow traditional design principles” when millions will buy the newest, unoriginal copy of Call of Duty?

Richard Nguyen · October 26, 2017 at 4:25 pm

Thank you so much for taking the time to write such a thoughtful and measured response. It brings me joy to see this generating more discussion!

I agree with your counterpoints, as they reflect a very real mindset that a good proportion of gamers hold: they do not play games primarily for the narrative. We anticipated that gamers who hold that philosophy, which is just as valid and understandable as playing games primarily for a narrative, might find that these arguments don’t necessarily apply to them. I also understand that this philosophy of play isn’t a dichotomy, and that gamers may shift dynamically along a spectrum based on what they seek from any particular game. I am personally a big fan of games with very little narrative ambition; I find them simply fun, challenging, and rewarding to play, and I respect how well pure systems design can be executed.

It stands to reason, then, that such gamers are completely willing to accept ludonarrative inertia, because the narrative isn’t an important part of why they have come to play their games of choice. Yet, even if a narrative is an important priority for neither the developer nor the player, in practice we come across many games that present narrative components (cutscenes, settings, stories, characters, plot lines, etc.) that ultimately compose a larger, substantive narrative. Whether or not the developer intends their game to be “narrative-focused,” and whether or not the player has come to the game ready to care about a narrative, there is still technically a narrative being presented, whether players or developers like it or not. In reality, it’s also not quite as simple as developers choosing not to “focus” on narrative, because games are pretty much expected to have stories even when their focus is the gameplay system.

To again refer to John Carmack’s quote: “Story in a game is like story in a porn movie. It’s expected to be there, but it’s not that important.” Here is a developer, perhaps like many others, expressing a likely frustration with the idea that they must wrap their very fun gameplay ideas around a story. Even if their goal is ultimately to create the most robust combat system or ship-building simulator, the expectations of the larger market and industry practice all but mandate that some sort of narrative be presented. The result is games where the narrative is very clearly secondary to the gameplay, which should theoretically be fine, as we addressed before. In practice, however, it may lead to games where players must spend time dealing with narrative components that are so clearly secondary, or so poorly integrated into the gameplay (i.e., tacked on), that they stand as awkward features of the game that I have to endure to get to the “good bits.” Why not, instead, design your robust gameplay systems to naturally develop the obligatory narrative itself, instead of keeping the two separate and having them butt awkwardly against each other, vying for the player’s attention? That way, gamers are treated to the gameplay they came to experience, and no longer have to sit through cutscenes or dialogue they don’t find all that compelling, or that hold them back from experiencing the game they want.

This is rampant across the most popular AAA games as well, such as open-world games where a completionist might have to endure poorly thought-out side quests and story content. Take, for example, Diablo 3. In Diablo 3, there is very clearly a compelling action-RPG and loot-based gameplay system with a laughable story tacked on because it just has to be there. Given how the game is meant to be played, players go through many playthroughs of the “campaign,” and every time they must skip a good number of cutscenes and poorly written exposition dumps before, during, and after every quest. We can all pretty much tell that Blizzard was not making Diablo 3 to tell a compelling narrative; they just needed one to be there. Otherwise, we would just be hacking and slashing with no reason or context whatsoever. While, at the end of the day, players just had to press “ESC” a number of times and yawn through this “story” part of the game, I want to argue that this ludonarrative inertia should not be acceptable and that gamers deserve more. When a work presents itself as composed of several aspects, the standard should be that all of them are good and work well together, so that what you “do” and “play” actually builds on this obligatory larger narrative. Instead, we have games where the gameplay is great, but I just skipped over or overlooked the story.

To summarize, I certainly think that ludonarrative inertia is not inherently negative, that it can have its place, and that there will be gameplay that doesn’t build toward stories and characters at all. This paper is a discussion of when ludonarrative inertia becomes negative, and of how negative ludonarrative inertia affects gamers more often than they perhaps realize. Regardless of developer or player intent, games are obligated to present some semblance of narrative, and in execution they nearly always do present narrative components. The player has a choice of how to approach this “narrative”: whether to take it seriously or ignore it completely. However, it is still a narrative they must objectively experience, even if they subjectively suppress it from their appraisal of the game. That objective experience amounts to the time and effort a player spends ignoring that narrative. And, really, should that standard be acceptable? Should players have to expect mediocre parts in their games? With any product or work of art, one would ideally hold it to a standard as close to “perfection” as possible. Yet gamers have been institutionally conditioned to think of narratives in games as superfluous or typically bad, even though games are still expected to have these narratives, whether as mere table-setting or as an important part of the game.

Would love to know your further thoughts! I know it’s a long-winded response, and I hope I addressed your thoughts in a relevant way haha.

Shaman;Girls · October 28, 2017 at 10:26 pm

Thank you for clarifying your perspective. I agree that creators of video games should not lower their standards in response to low expectations. I am somewhat of a pessimist, however, and do not think it is an issue the gaming industry will recover from any time soon. The popularity of low-quality major titles is quite similar to the popularity of the iPhone. Both are overpriced, offer countless unnecessary add-ons, and have cheaper, comparable alternatives, but superior advertising and a large, established consumer base sustain their mainstream status.