Video game designers engineer worlds receptive to player input. Players are empowered with the agency to make decisions that can change the course of the game’s narrative and the characters within it. This decision-making is a core, interactive tenet of video games. In emulating the experience of choice and deliberation, there are various elements that designers must consider. Key among them is morality, or the principles humans hold to distinguish between “right” and “wrong” behavior, and how it influences player choice. The mechanics of moral decision-making across video games have been diverse, and only sometimes effective. In the time I have spent playing narrative games with morality as a central component and game mechanic, I have found that the games with the most minimal and least intrusive systems better emulate not only moral decision-making, but also the emotional consequences that follow. Presenting morality as its own discrete game mechanic is counter-intuitive, because it diminishes the emotional impact and self-evaluation of moral decision-making.

To begin, I will be applying a rudimentary framework of morality to fuel this discussion because the focus is not on morality proper, but on how it influences player choice. Video games that use the moral binary framework present to the player three possible moral courses of action: good, bad, and, sometimes, neutral. For our purposes, we will assume that the majority of players are good-natured, and believe in what society deems and teaches them to be “right” or “good.” At the very least, players understand what should be done. This includes, but is not limited to, altruism and cooperation. Good moral decisions often require self-sacrifice to achieve a greater good. Your avatar will sacrifice money for the emotional satisfaction of having donated to a virtual beggar. “Wrong” or “bad” behaviors, then, violate moral laws. Such behaviors include, but are not limited to, murder, lying, cheating, and stealing. Video games present morally “wrong” or “evil” choices as temptation, the desire to make the easier, selfish choice. Of course, life is not so simple as “right” and “wrong” or “good” and “bad.” To clarify, I will be using “good” and “right” to refer to the same concept, and will be using them interchangeably. The same applies to “bad” and “wrong.” The “neutral” alternative describes behaviors with no moral value, which are often presented as inaction in gaming scenarios. A flavored subset of the “neutral” choice is the “morally gray” choice, occupying a middle area between “good” and “bad” in which the moral value of an action is unclear. For instance, a typically “wrong” behavior, such as stealing, may be inflected with the “right” intention, such as stealing medicine in order to save your dying sister. In this situation, it is difficult to value the action as fully “good” or “bad.”

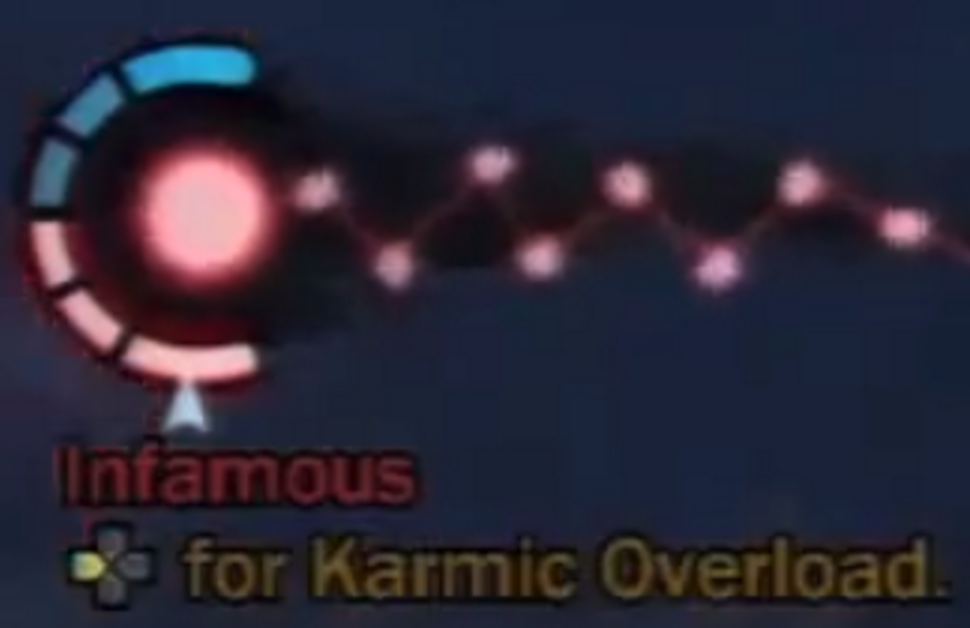

The moral binary of Infamous (discussed below).

I outline this moral theory under the assumption that players’ moral beliefs will extend to the decisions they make as the avatar in the game world. Of course, players often experiment with moral decision-making in games by “role-playing” the good or bad person, but such an action already makes players acknowledge their pre-existing moral beliefs. At this point, players become detached enough from the avatar, under the knowledge that the avatar’s actions do little to reflect their own moral selves, that they would care drastically less about the consequences of such actions. I will instead be examining the cases in which players seek to make decisions in games as if their avatars were a full extension of their moral selves. In other words, players make decisions as if their own moral selves were truly operating in this world. Therefore, players would care more about how their decisions accurately reflect their moral beliefs. Otherwise, there are little to no personal stakes involved in decisions when you know they say nothing about you.

Designers often abide by the convention that morally right decisions are selfless and performed for the greater good, while morally wrong decisions are selfish and performed for personal gain. Players who make the morally right decision often engage in the more difficult and complicated narrative pathway. For instance, choosing to ignore a mission directive in order to save an endangered life may lead to punishment, and requires the player to work harder to make up for lost time or resources. In spite of the extra layer of difficulty, these morally right decisions are more emotionally rewarding because they preserve the player’s conscience. Again, we assume that the majority of players inherently abide by what society deems to be right and wrong. Players who make the morally wrong decisions engage in the more expedient pathway that facilitates direct personal gain. For instance, choosing to ignore endangered civilian lives in order to fulfill the mission directive leads to no direct punishments. Instead, the consequences of this morally “wrong” decision come through the emotions of guilt and disappointment due to its violation of the player’s conscience. This is not to say that players are discouraged from making morally “wrong” decisions in video games. Rather, having players choose either a “good” or “bad” decision places responsibility in their own hands, rather than the writer’s. Allowing players to explore the emotional consequences of both ends of the moral spectrum forces them to reevaluate their own beliefs. In the case of the moral binary in video games, such reevaluation turns into the reaffirmation of societal norms. Designers use this moral theory in decision-making to reinforce the conventional meaning of “right” and “wrong.”

Fallout’s Good and Evil (discussed below).

The two primary elements of morality in a video game context are intention and behavior. The player’s intentions are enacted through the avatar’s in-game behavior. In other words, the decisions made in a video game are determined by player intention. The behavior can be objectively categorized into “right” and “wrong” according to the game’s narrative. However, the behavior carries with it the player’s intention, which cannot definitively be measured or categorized by the game itself. The player’s subjective experience is then the key factor in determining how well the video game emulates moral decision-making. What the avatar feels is independent of the player’s own feelings as a result of a moral decision. With the binary morality system, designers make a direct appeal to the player and his or her moral beliefs.

The psychological phenomenon of “cognitive dissonance,” where one’s conflicting and inconsistent behaviors and beliefs cause discomfort, drives the consequences of moral decision-making. This internal, emotional conflict compels a person to change one of those beliefs/behaviors in order to reduce such discomfort. When good-natured players make a morally “wrong” decision in a video game, their beliefs will be inconsistent with their behavior. Even if the player acts unwittingly or does not believe that they made a morally “wrong” decision, the game’s systems will still punish and treat them as if they did. For example, a person playing Grand Theft Auto 5 may fire a gun in public and not believe that it is wrong or against the law. The game’s systems, in the form of police, will nevertheless respond negatively. The player is left to reconcile their moral beliefs with those of the video game. There are three likely responses when a good-natured person (as we assume the majority of us are) makes a morally wrong decision: (1) change your beliefs to be more consistent with your behavior, (2) live with and accept the discomfort and inconsistency, or (3) sublimate, and find a reason or rationale to justify your inconsistency. The idea is that cognitive dissonance creates the emotion of discomfort. The first two options are labeled as truer dissonance scenarios because they are done in response to such discomfort. Option (3), on the other hand, precludes discomfort because the sublimation will have already taken place due to a third-party influence. Thus, players are not made aware of the inconsistency and continue, unaffected by their moral decision. From my experience, the most effective moral systems have compelled me to respond with Options (1) and (2), which most align with realistic moral decision-making and the phenomenon of cognitive dissonance.
When a game provokes the visceral discomfort of making a decision you realize was inconsistent with your beliefs, you will ostensibly be more compelled to respond. When video games inspire Option (3), sublimation, the player transfers responsibility to a third party and is therefore relieved of any personal, emotional consequence. Sublimation allows players to rationalize or provide an external explanation for their behavior. Therefore, responsibility for that moral decision is displaced, which mitigates any true feelings of cognitive dissonance. This is not to say that Option (3) never occurs in realistic moral decision-making. I am arguing that the modern video game most often counter-intuitively facilitates this transfer of responsibility, even when its goal is to appeal to or challenge a player’s moral beliefs through cognitive dissonance.

Pictured: Leon Festinger’s Cognitive Dissonance Model, with three possible actions (in green) that a subject could implement to reduce cognitive dissonance.

Now that I have clarified both my moral framework and the role cognitive dissonance plays in moral decision-making, I will analyze how these work in popular video games that use the moral binary framework. I will examine its role and evolution in several narrative-driven open-world and role-playing games. We will start from the simplest, most direct binary systems and work our way into games that eschew the binary for more minimalist approaches.

In the Infamous series, the player must decide whether his avatar (Cole), a super-being with electric-based powers, will be a “hero” (good) or a “villain” (bad). In order to secure the most successful playthrough, in which the player unlocks the strongest abilities and completes the narrative, players must commit to one moral path and constantly commit the deeds that earn them either good or bad karma points. Each path provides unique abilities inaccessible in the other, incentivizing commitment to one moral path rather than neutrality. As a result, players have access to only two viable playthroughs of the same story. The hero playthrough facilitates a precise and focused combat playstyle while keeping your electricity blue, and the villain playthrough facilitates a chaotic and destructive combat playstyle while turning your electricity red. In order to earn karma points, the player must constantly engage in activities consistent with the respective path, as demarcated by the video game itself. Good karma points are earned by helping citizens and choosing the good prompt instead of the bad during pivotal story events. Bad karma points are earned by destroying the city, murdering citizens, and choosing the bad prompt instead of the good during pivotal story events. There are no neutral or morally gray options. A player’s karma meter is plastered on the heads-up display (“HUD”) to remind the player that their actions are omnisciently tracked and scored, essentially turning morality into its own mini-game.

Karmic decisions in Infamous 2.

In spite of its blatant tracking and systematic reminders, Infamous’s binary morality system is comically shallow and ineffective in producing realistic emotional consequences. The game reduces moral decision-making to a binary, because it can only be completed upon fulfillment of either the hero or villain pathways. The narrative makes its morality clear in that heroes are “good” and villains are “bad.” For the ordinary player, the only choice then is to consider whether they want to be consistent with their own good-natured beliefs and choose the hero path, or to deviate from the norm and explore moral violations as a villain. Aside from the joys of blowing everything up, choosing the villain’s path should then inspire some amount of discomfort, which should consequently lead to either (1) a change in player attitude to coincide with the behavior, (2) an acceptance of the discomfort, or (3) sublimation. The game’s blatant morality system in all cases inspires sublimation, and therefore fails to provoke any genuine cognitive dissonance within the player for several reasons.

Karmic choice in Infamous Second Son.

First of all, Infamous’s blatant tracking turns morality into a purposeful meta-game to be conquered. Therefore, the goal to reach the highest karma levels is extrinsically motivated by in-game rewards such as unlockable abilities, rather than intrinsically motivated by the game’s narrative. The many moral decisions the player makes as Cole are driven not by how the player would act, but by what moral pathway the player committed to at the very beginning. This allows for little moral experimentation on a case-by-case basis, as the player’s goal is to globally make either good or bad decisions.

Karma farming in Infamous 2.

Second, the game’s design ties skill progression to achieving full hero or villain status. This makes it difficult to completely finish the game if the player does not commit to a moral pathway. Thus, game designers are obligated to provide players with the opportunity to “farm” karma points, in the case that they have poorly leveraged the karma system, to advance in power. Scattering redundant and bountiful opportunities to advance in karma level throughout the city diminishes the emotional impact of each moral decision. For example, there will be countless civilians on the street whom you can either choose to revive (good) or bio-leech for energy (bad). This becomes mundane because (1) you have already made the same decision countless times before and (2) you do not have a choice because your decision has already been made based on your playthrough. Infamous presents morality as a game mechanic with clear, delineated consequences. Both pathways end in earning more powerful abilities. By asking the player to virtually choose a side at the beginning of the playthrough, no further thought or questioning is required because the player no longer feels any responsibility for their actions. Once players lose a sense of responsibility for their and their avatar’s actions, it is easier to dissociate themselves from moral acts that the avatar has performed. The game itself tracks and quantifies the player’s moral choices and produces a predictable response every time. Any cognitive dissonance is displaced by how the game virtually forces the player to commit to a single moral pathway in order to succeed. In games like Infamous, we submit to the game’s predetermined, simplistic morality, and are given no chance to evaluate such decisions based on our own moral beliefs.

The Karma Meter in Infamous.

Granted, no one has ever expected Infamous’s binary morality system to be the paragon of moral decision-making in video games or for it to change anyone’s moral code. Yet, it is clear that binary morality systems have become the rule, not the exception to exploring morality in video games. For example, high-profile and critically acclaimed narrative games such as BioShock, the Mass Effect trilogy, and even the Fallout series all abide by similar moral mechanics.

BioShock’s harvest-or-rescue binary.

In BioShock, the ending changes based on the player’s decisions about how to deal with its Little Sisters. The binary morality is as follows: save the sister (good) or harvest her (bad). Harvesting a sister will kill her in order to drain her life force and reap more economic benefits. The moral dimension of this decision lies in determining the fate of this narrative entity, in choosing whether or not to kill the sister. The good and right choice is to save the sister and restore her life, which provides less Adam (in-game currency) immediately but is rewarded with gifts of gratitude later on. One of the game’s central figures, Tenenbaum, explicitly denotes this to be the narratively good moral choice, especially when the most optimistic and humanist ending can only be achieved upon saving all of the sisters in Rapture. It is only in this ending where the Sisters help the avatar escape from Rapture. The cutscene, saccharine and hopeful, is accompanied by Tenenbaum’s affirmation of the player’s “good” morality. The morally bad and “wrong” choice is to harvest the sisters and essentially take their lives to receive more Adam immediately but with no long-term reward. The bad ending (accompanied by Tenenbaum’s extremely bitter and dismissive monologue if the player harvests all the sisters) depicts the avatar’s brutal and power-hungry takeover of Rapture’s remains, and the splicers’ savage invasion of the world above the surface.

The narrative makes evident, through Tenenbaum’s insistence upon humanity and these dichotomous endings, that there is a clear moral binary between good and bad. Yet, by tying the moral decisions concerning the fate of these sisters to directly economic, rather than purely emotional consequences, the game pollutes any potential moments of cognitive dissonance as a result of the morally “wrong” decision. What is initially posited as a measure of the player’s moral values is transformed into an exercise in economic impulsivity: whether or not players can delay immediate gratification for longer-term rewards. This is not to say that moral decisions can never be tied to economic consequences. Choosing between stealing or donating money holds unpredictable consequences and punishments, and one can get away with morally bad economic decisions while feeling internal guilt. For BioShock, however, the endings clearly attempt to evoke emotional consequences, particularly through Tenenbaum’s shaming of the player in the bad endings with no further reference to economic rewards. The experience of cognitive dissonance would be where the morally “bad” player either (1) changes their beliefs to be more consistent with their actions (believing that they were inherently justified in or truly wanted to harvest the sisters) or (2) accepts their actions as bad and lives with the shame of having murdered little children.

Thus, it seems as though the added economic layer of Adam rewards in moral decision-making was done more out of convenience, a way to give the player Adam instead of inspiring a moral quandary. By the end of the game, players may place responsibility on economic motivations, rather than personal or internal motivations, as the driving force behind their decisions. Moral responsibility is displaced by the justifications of either achieving a certain ending cutscene or by maximizing economic gain. As a result, the player experiences no dissonance because their “bad” actions are believed to be consistent not with their moral beliefs, but rather with this other economic motivation. While BioShock does a better job of posing a more complicated moral situation than the simple choice of “being a hero” versus “being a dick,” it instead settles for the economic quandary of choosing between “being a rich hero” versus “being an impoverished dick.”

While I adore the Mass Effect trilogy, I would be foolish to believe that people did not already determine to pursue a full “paragon” (good) or “renegade” (bad) playthrough within the first ten minutes. Paragon choices most often involve compassion, non-violence, and patience, whereas renegade choices are aggressive, violent, and intimidating. Narratively, paragon decisions are framed as heroic, and are met with an NPC’s openness and friendliness. On the other hand, renegade decisions are framed as apathetic and ruthless, met with an NPC’s fear and disapproval. The game’s feedback loop then reinforces the idea that paragon is conventionally good, and renegade is conventionally bad. The entire morality mechanic in this game revolves around the choices made in conversation. In fact, the game’s dynamic conversation wheel facilitates moral decision-making without the player even having to look at the dialogue options: the upper right and left segments of the wheel are paragon choices and the lower right and left segments are renegade choices. The right middle section is reserved for neutral options, but is not a viable option for those looking to maximize their moral decision-making output. While being neutral is, in and of itself, a moral decision, the game grants little to no narrative benefits to doing so, and players are positioned to either progress to full paragon or renegade status.

A representation of the six choices on Mass Effect’s conversation wheel.

Players can practically play and achieve full paragon or renegade status without even reading or thinking about the dialogue options they choose. At this point, players have broken the moral binary system, because the player action no longer directly reflects their beliefs, eliminating the possibility for cognitive dissonance and genuine moral quandaries.

Mass Effect’s conversation wheel in-game. Note the color-coding for paragon and renegade choices.

Mass Effect nearly transforms moral decision-making into an automatic, thoughtless process. Instead of making what you deem to be the appropriate moral choice in each context, your morality is globally predetermined by the type of playthrough you wish to achieve. There are incentives and narrative rewards for committing to either paragon or renegade, and nothing is gained by choosing neutral dialogue options. For instance, Commander Shepard begins as a neutral personality to fit the player, and is strongly characterized by moral decisions the player makes at the dialogue wheel.

Another in-game conversation.

There is even a meter that tracks how good and bad your Shepard is on a moral spectrum. You start in the neutral gray zone in the middle, and “progression” is achieved whenever your tracker moves towards paragon’s blue side or renegade’s red side. Players can then justify morally wrong acts as playing by the game’s moral rules, and not their own. By turning morality into a game in and of itself, designers undercut any emotional consequences these decisions may have on the player.

Progress along Mass Effect’s moral tracker.

The Fallout series has done well in both perpetuating and addressing the problematic moral binary in video games. In Fallout 3, your behaviors are omnisciently tracked and marked under a karma score, distinguishing both the player’s and the avatar’s actions as good or evil.

Fallout’s Karma Indicator.

Good choices include granting charity to survivors in the wasteland, while evil choices include stealing, even when no one is looking and even if the object were but a mere paper clip. Again, this is another example of an unrealistic moral scenario, in which every time you steal a paper clip you receive a notification and unpleasant screech denoting that you have lost karma. It is almost as though I avoid making evil choices, not to avoid guilt or to save my karma score, but primarily to avoid that unpleasant screech.

Fallout’s Karma Indicator again.

Here is yet another case in which the game’s progression system rewards committing to one moral side, and every decision you make is under scrutiny and is met with predictable consequences. Upon learning that the only penalty to pay for stealing is a bit of on-screen text and a screech, why not just steal everything when no one is looking? Any guilt you might ordinarily feel is diminished by the reminder that this morality system is but a meta-game that can be exploited to increase your karma level by repeatedly donating caps to any schmuck in the wasteland. Fallout: New Vegas takes measures to address this issue by incentivizing players to maintain a morally neutral playthrough via dedicated and rewarding perks for neutrality.

The good-and-evil point system of Fallout 3.

However, there still lies an issue in the blatant “gaminess” of its morality systems, where players feel as though their moral decisions are motivated extrinsically rather than intrinsically. In this case, players feel the need to satisfy the game’s expectation to commit to one of two (or, for New Vegas, three) moral pathways because of the various benefits/perks that come with such a playthrough. Not only that, but the Fallout games also fail to attach narrative consequences to a player’s morality. For the sake of preserving this open-world game’s consistency across playthroughs, the narrative is largely unaffected by the player’s moral decisions. NPCs respond equally to “bad” and “good” avatars. The game’s primary response to moral decisions is merely mechanical, by the omniscient tracking meter and consequent on-screen notification of when a player has made a moral decision. The drastic disconnect between the player’s moral decisions and the game world’s frigid indifference to such moral actions inspires little questioning or thought. Players, knowing that their actions have minimal consequence, place moral responsibility upon the game’s system rather than themselves and their own moral beliefs. By the end, the experience has boiled down to accommodating the game’s own defined sense of morality instead of exploring your own beliefs.

Fallout: New Vegas’s Karma Tracker.

However, not all hope is lost! Some games come closer to emulating the experience of moral decision-making. Telltale’s The Walking Dead series remarkably captures the insecurity, spontaneity, and unpredictability that often come with moral decision-making.

The Walking Dead’s ambiguous, unpredictable choices.

Throughout the game’s interactive cutscenes, there are often timed decisions players must make between four options. The player never knows which decisions are tracked, nor what consequences they might have, whether short-term or long-term. The only indicator players receive is a line of text that denotes “[insert character name here] will remember that.” Even in that statement, the impact is ambiguous, and the player is left to discern whether they made a good or bad decision according to their own morality, rather than that of the game’s narrative. Mechanically, The Walking Dead presents no explicit menu or HUD tracker for the player’s morality level, provides little-to-no feedback on these decisions’ narrative/gameplay impacts, and inflicts unpredictable consequences.

Clementine’s ambiguous response to your choice.

By contrast, the games mentioned above explicitly posited their own binary moral system: firm rules that the player must play by. In addition, the games above predictably provided information and definitive feedback to these moral decisions, lessening their emotional impact in the long run. Players, once made cognizant of the extrinsic forces that may be guiding their decisions, feel relieved of any moral responsibility for choices made in these narratives. This is because player action is driven and can be explained by a factor other than their internal beliefs. In The Walking Dead, a minimalist morality system with no clear categorization or consequence keeps responsibility in the player’s hands. Systems may still track player choices and make them instrumental to the progression of the story. However, minimalist systems do little to display or indicate to the player the value of their decisions and how they will impact the narrative, which feels more realistic.

The consequences of your actions are ambiguous.

Choices made are more satisfying when the player understands or feels that they have been intrinsically motivated, and are the result of their own agency unpolluted by other incentives.

The Witcher 3 also succeeds in unpredictably imbuing morality into the seemingly mundane scenarios that occur in its world. Aside from major quest lines that also pose variable, complicated moral decisions, the decisions the player makes through Geralt’s ordinary day of work reach a sobering, disarming level of emotional realism. Geralt constantly runs across merchants, beggars, looters, and all sorts of unsavory characters throughout the game world. More often than not, the player must decide upon whether or not to intervene, and how to resolve conflicts upon entrance. For instance, a townsman asks me to find his missing child in the woods. Here, I have the opportunity to haggle for more pay beyond my standard fee, even though it is evident that he holds very little of value in his hut of sticks and mud. I eventually discover the son’s bones, left over by wolves. Upon return, I am presented with two more difficult decisions. I can choose to lie about his son’s fate or to tell him the truth, which is a subjective moral quandary I will not pursue here. Either way, he refuses to pay me because I have produced no evidence, while realistically he is likely disheartened by his loss and has no money anyway. At this point, I can choose to “Witcher” mind-trick him into paying me, take it by force, or leave him in his grief.

In The Witcher 3, dialogue options have no clear hierarchy or consequences.

Even if I choose to be “evil” and force him into paying me, I will be receiving so little money that it would be insignificant, and my “evil” deed will not be sufficiently justified by the economic gains. The difference between such economic decisions in The Witcher 3 and BioShock is that, while they are both tied to “bad” morality, BioShock’s immediate rewards and short-term gain rationalize the decision. Here, the economic rewards are so blatantly insignificant that the only rationale behind such a deed most likely stems from the player’s indifference to this NPC’s plight. Therefore, The Witcher 3 is more likely to provoke cognitive dissonance because morally “bad” decisions cannot be rationalized or justified by any other incentives.

I will admit that I opted to mind-trick him for his money, as a spur-of-the-moment decision. I took his handful of coins and left him to grieve for his son. What is remarkable is that nothing guided me to make such a morally questionable decision. Money mattered little to me, so it must have been a matter of pride: desiring some acknowledgment for the completion of work. I would like to think of myself as a good person, and I always aspire to be one in video games. Yet, no substantial financial, mechanical, or other extrinsic factor possessed me to exploit the man. The worst part is, I got away with it, and I have to live with this decision throughout the rest of my playthrough, not to mention the chance that I may see that man again. At this moment, I felt like a bad person, and chose to live with this discomfort.

Some moral choices in The Witcher 3 must be made within a time limit.

This side quest alone presents at least three moral choices that work. They work because The Witcher 3 holds no formal morality system, which means none of your actions is omnisciently tracked or denoted by the HUD. More importantly, the consequences/punishments are unpredictable and change depending on context. My interactions with the desperate townsman above may be repeated in different scenarios and stories with different effects. I found these numerous little scenarios to be the most effective because the game appeared to be indifferent to my choices. The Witcher 3’s world of vice and monsters holds no definitive criteria to define good and evil actions, and therefore does little to mechanically address them, such as through on-screen notifications. This places all responsibility upon the player to (1) determine what is right and wrong based on his own beliefs and (2) deal with the consequences (e.g. guilt) of his own accord. Beyond crimes committed in the city, the game realistically grants you the freedom to be both the hero and the dick without formal judgment beyond your own self-evaluation and the unpredictable reactions of narrative agents. This is not to say that the game holds no morality at all, but that it does not commit to an objective, explicitly defined moral binary. The moral universe is then determined not by the game itself, but by the agents, such as NPCs, within it that interact with the player and present their own diverse moral beliefs.

Self-contained moments in other video games also succeed in provoking realistic moral quandaries. For instance, Red Dead Redemption: Undead Nightmare has a side quest in which you hunt for a monster that is terrorizing country folk. You find that it is a peaceful sasquatch, the last of its kind. You must choose either to kill it, satisfying the bounty and, in a sense, ending its loneliness, or to leave it to live and die in solitude. Here, there is no clear good or bad, even if the choice is still binary. The choice will therefore also have no clear or predictable consequences. You will have to live with this permanent, immutable choice for the rest of the game, as the game itself will be indifferent to your decision.

The Sasquatch Encounter in Red Dead Redemption: Undead Nightmare.

Games like The Walking Dead and The Witcher 3 capture an essential component of moral decision-making: internal conflict. One’s cognitive dissonance is most active when these moral decisions have no extrinsic explanation or justification. Rather, the quandary is found within, an internal conflict propelled by self-evaluation. Discrete morality systems, such as the prominent binary system, may actually detract from the emotional impact of moral decision-making because they so readily provide players with an extrinsic justification for their behaviors. By turning morality into an explicit meta-game, designers may unintentionally displace the player’s responsibility for their own actions and hinder the effects of cognitive dissonance in moral decision-making. Minimalist game design for moral decision-making better matches the moral experiences of ordinary life. Should I steal a cookie from the cookie jar? No one will know. The lines between good and bad are realistically blurred, because there exists no omniscient authority (unless you count your conscience) to denote and tally you on all the karmic decisions you have made in a day. At the end of the day, moral experiences in video games should not be determined by karma meters and reward systems.